Abstract

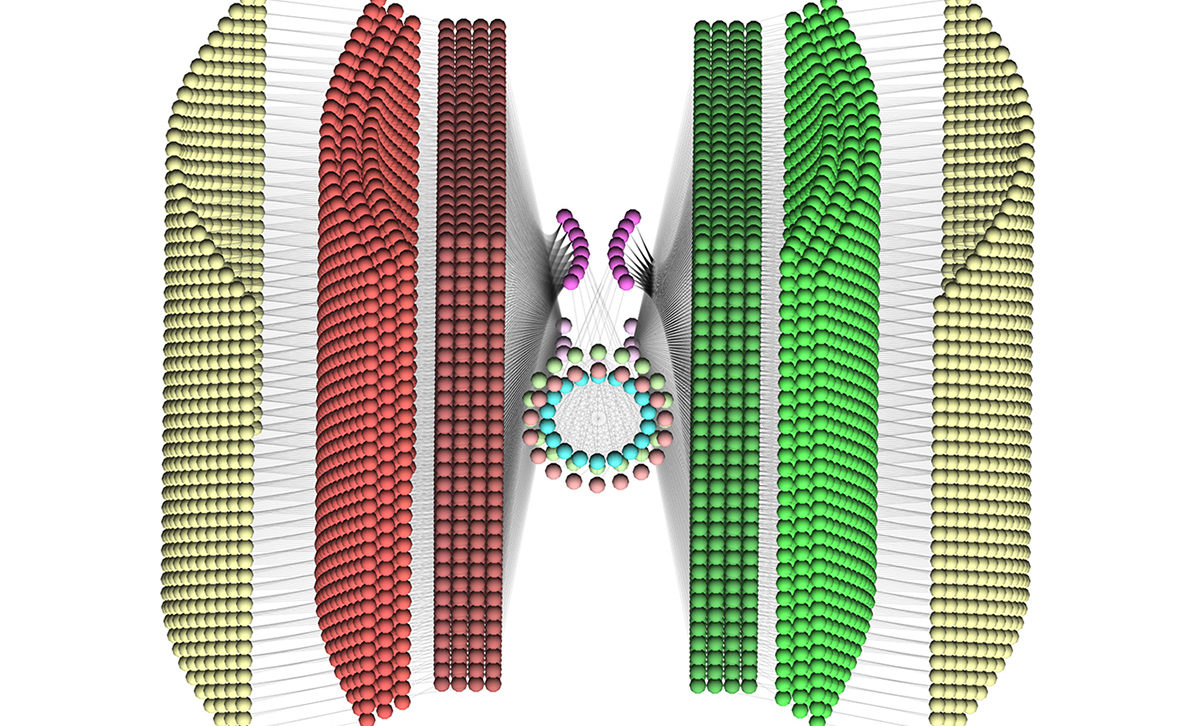

The insect central complex is an enigmatic structure whose computational function has evaded inquiry, but which has been implicated in a wide range of behaviours. Recent experimental evidence from the fruit fly (Drosophila melanogaster) and the cockroach (Blaberus discoidalis) has demonstrated the existence of neural activity corresponding to the animal’s orientation within a virtual arena, providing a first insight into one component of this structure. This evidence shows two key features of the neural activity: an offset between the angle represented by the neural activity and the true position of visual features in the arena, and the remapping of a visual arena that wraps around at ±135° onto an entire circle of neurons in the central complex. Here we present a computational model which reproduces this experimental evidence in detail and predicts the computational mechanisms that underlie the data. We predict that both the offset and the remapping of the fly’s orientation can be explained by plasticity of the synaptic weights between the visual receptive fields and the neurons representing orientation. Furthermore, we predict that this learning relies on neural pathways that detect rotational motion, and that the detected rotation drives the rotation of activity in a neural ring attractor. Our model also reproduces the ‘transitioning’ between visual landmarks seen when rotationally symmetric landmarks are presented. This model can provide the basis for further investigation into the role of the central complex, which promises to be a key structure for understanding insect behaviour, and it suggests approaches towards creating fully autonomous robotic agents.
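To make the ideas in the abstract concrete, the following is a minimal illustrative sketch, not the published model. It represents orientation as a bump of activity on a ring of neurons, moves the bump using a rotation signal, and stretches a ±135° arena onto the full ring. The neuron count, the bump shape, the function names, and the fixed linear gain are all assumptions made for illustration; in the model described above the remapping and offset are predicted to arise from synaptic plasticity rather than being hard-coded.

```python
import numpy as np

N = 16  # number of ring neurons (assumed for illustration)
preferred = np.arange(N) * 2 * np.pi / N  # preferred heading of each neuron (rad)

def bump(heading, kappa=4.0):
    """Activity bump centred on `heading` (radians); the shape is an assumption."""
    return np.exp(kappa * (np.cos(preferred - heading) - 1.0))

def decode(activity):
    """Population-vector estimate of the heading encoded on the ring, in [0, 2*pi)."""
    return np.angle(np.sum(activity * np.exp(1j * preferred))) % (2 * np.pi)

def integrate_rotation(heading, angular_velocity, dt):
    """Advance the encoded heading using a rotational-motion signal (rad/s)."""
    return (heading + angular_velocity * dt) % (2 * np.pi)

def arena_to_ring(arena_deg, arena_span_deg=270.0):
    """Stretch an arena angle in [-135, 135] degrees onto the full ring.

    A fixed linear gain stands in for the learned visual-to-ring weights.
    """
    return np.deg2rad(arena_deg * 360.0 / arena_span_deg) % (2 * np.pi)

# Start at the ring position for a landmark at 0 degrees, then rotate
# at 1 rad/s for 0.5 s and read the heading back from the ring activity.
heading = arena_to_ring(0.0)
heading = integrate_rotation(heading, angular_velocity=1.0, dt=0.5)
estimate = decode(bump(heading))
```

With this gain, both edges of the arena (+135° and -135°) land on the same ring position, which is the sense in which the arena wraps onto a complete circle of neurons.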

A video of the model in action can be found on YouTube:

https://www.youtube.com/watch?v=qRafuME5wfU

Resources

The central complex model presented here is described in the PLOS ONE paper:

A. Cope, C. Sabo, E. Vasilaki, A. B. Barron, and J. A. R. Marshall (2017), “A Computational Model of the Integration of Landmarks and Motion in the Insect Central Complex,” PLOS ONE, Feb. 27. doi: 10.1371/journal.pone.0172325

The model, the data used to create the figures, and the analysis scripts can be found on GitHub: