What if we could design an autonomous flying robot with the navigational and learning abilities of a honeybee? Such a computationally and energy-efficient autonomous robot would represent a step-change in robotics technology and is precisely what the ‘Brains on Board’ project aims to achieve.

RESEARCH

Autonomous control of mobile robots requires robustness to environmental and sensory uncertainty, as well as the flexibility to deal with novel environments and scenarios. Animals solve these problems by having flexible brains capable of unsupervised pattern detection and learning. Behavioural biologists and neuroscientists are increasingly realising that ‘small’-brained animals such as insects have extremely rich behavioural repertoires. The honeybee, a very well-studied animal with a brain of only about 1 million neurons, exhibits sophisticated learning and navigation abilities through highly efficient neural processes. Bees can reliably navigate over several kilometres in three-dimensional space, learning the features that enable them to return to their nest. They can optimise the distances travelled on routes from the nest site to multiple forage patches, almost certainly without possessing a mental map.

Furthermore, bees’ brains can multi-task, are highly adaptable to completely novel scenarios, and exhibit extremely rapid learning. This is in marked contrast to typical control engineering solutions and AI approaches, including deep learning. Bee brains thus provide an excellent autonomous system to reverse engineer. Bees are far more sophisticated navigators and learners than Drosophila and other flies, yet their brains remain small enough for systematic investigation and modelling to be practical, in contrast to the much larger vertebrate brains of rats, cats, and primates.

OBJECTIVES

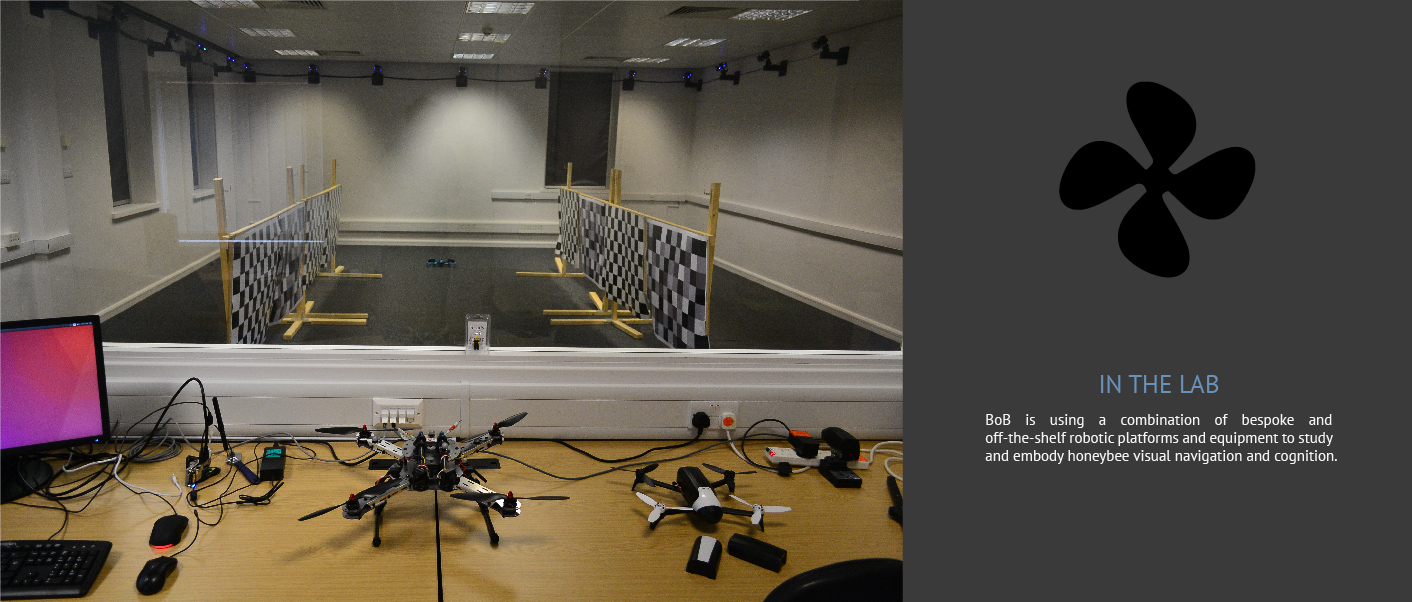

The “Brains on Board” Programme Grant will fuse computational and experimental neuroscience to develop a ground-breaking new class of highly efficient ‘brain on board’ robot controllers able to exhibit adaptive behaviour while running on powerful yet lightweight General-Purpose Graphics Processing Unit hardware now emerging for the mobile devices market. This will be demonstrated via autonomous and adaptive control of a flying robot, using an on-board computational simulation of the bee’s neural circuits; an unprecedented achievement in robotics technology.

The research objectives of the project are to:

Use the honeybees’ minibrain to advance our understanding of the computational bases of navigation and action selection.

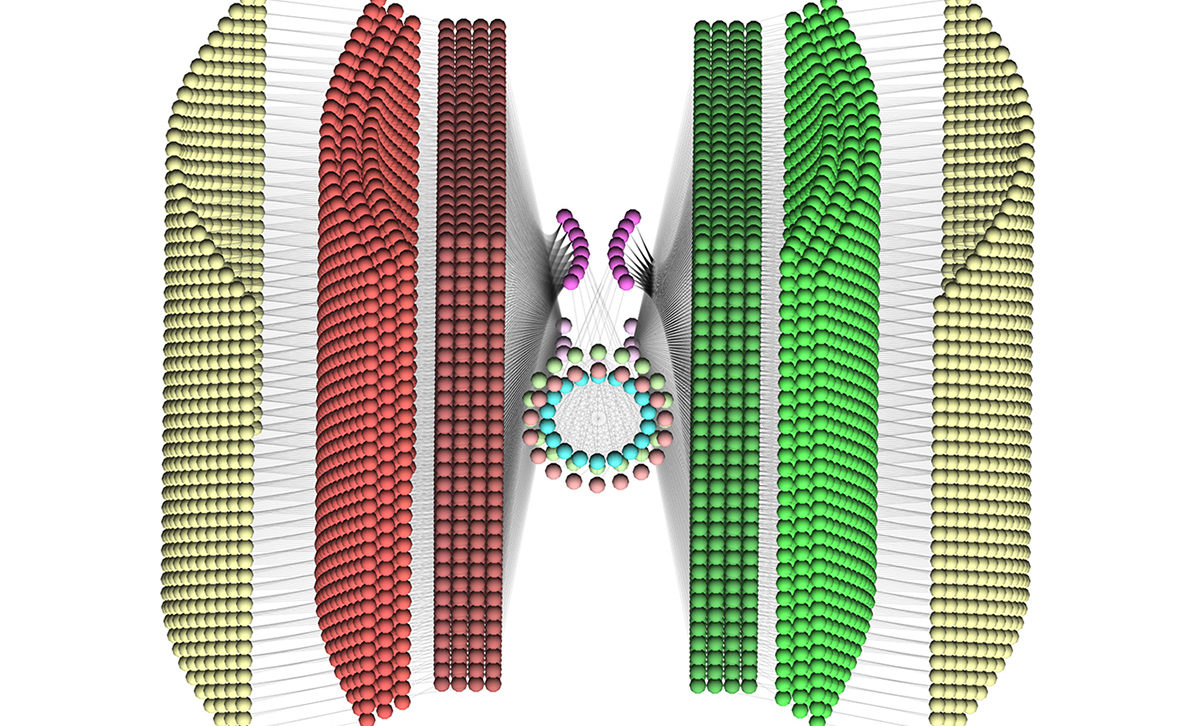

Reproduce these behaviours in minimal neural models running on computational hardware in virtual environments.

Develop neural modelling and hardware tools and techniques to a level at which real-time large-scale neural simulation on low-power, low-weight, high-throughput processor technology is feasible.

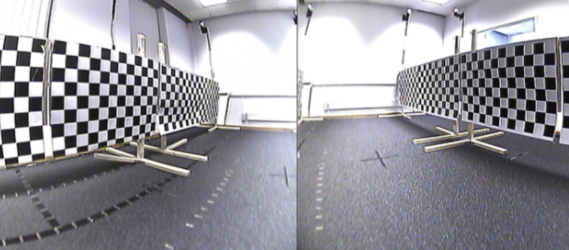

Develop flying robot platforms that replicate the sensory capabilities and flight dynamics of the honeybee to a high level of fidelity.

Deploy results from objectives 1 to 4 to develop and test on-board autonomous real-time controllers for flying robots.

IMPACT

The convergence of mobile supercomputing processor design, advances in neural and behavioural recordings, and novel bio-inspired learning algorithms presents a unique opportunity to make fundamental advances across a broad front. “Brains on Board” will deliver substantial impact, from basic understanding of brains to the development of robotics and autonomous systems technologies, establishing a clear lead for UK science, engineering, and technology in these areas. Beyond advances in basic research, these developments will create new technological and economic opportunities in robotics, artificial intelligence, computational intelligence, and insect neuroscience, and will benefit the neuroscience community in general.