Abstract

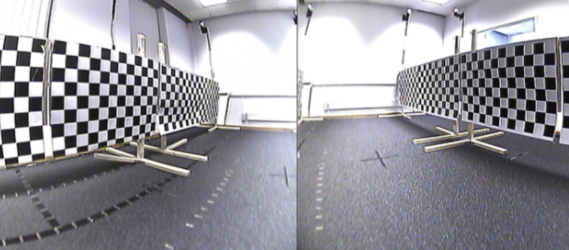

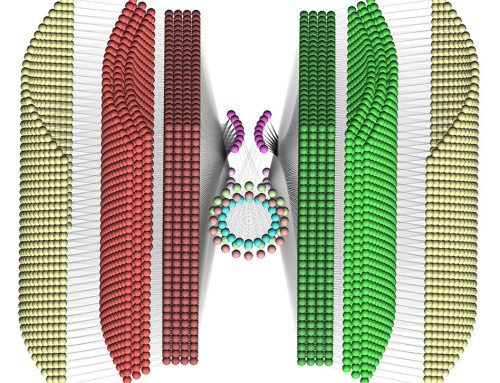

Designing hardware for miniaturized robots that mimics the capabilities of flying insects is of interest because the two share similar constraints (i.e. small size, low weight, and low energy consumption). Research in this area aims to give robots similarly efficient flight and cognitive abilities. Visual processing is important to flying insects’ impressive flight capabilities, but the embodiment of insect-like visual systems is currently limited by the available hardware. Suitable hardware is prohibitively expensive, difficult to reproduce, unable to accurately simulate the characteristics of insect vision, and/or too heavy for small robotic platforms. These limitations hamper the development of platforms for embodiment, which in turn hampers progress in understanding how biological systems fundamentally work. To address this gap, this paper proposes an inexpensive, lightweight robotic system for modelling insect vision. The system is mounted and tested on a robotic platform for mobile applications, and the camera and insect vision models are then evaluated. We analyse the potential of the system for the embodiment of higher-level visual processes (i.e. motion detection) and for the development of vision-based navigation for robotics in general. Optic flow calculated from sample camera data is compared to that of a perfect, simulated bee world and shows an excellent resemblance.

Resources

The visual system described here is detailed in the ASD paper:

- Sabo, R. Chisholm, A. Petterson, and A. Cope, “Inexpensive, Lightweight Robotics for Insect Vision,” Journal of Arthropod Structure and Development, Arthropods as Biological Models for Robotics: From Insects to Robots, August 2017. doi: 10.1016/j.asd.2017.08.001

The code used in the implementation can be found on GitHub: